If neural networks are to be used in a production environment, they must be brought into a practical form and applied by means of so-called inference. But first:

How do you recognise a drive shaft?

The shape? – There are different forms.

The material? – Countless parts of a car body are made of metal.

The surroundings? – Drive shafts are said to have turned up in open fields, too.

Of course, as humans, we can easily recognise a drive shaft, whether we encounter it in the plant, in a meadow or half-hidden under a stone. Our human intelligence enables us to form an abstract concept of “drive shaft” and reliably recognise the object.

Artificial intelligence – and thus neural networks – cannot do this in many complex cases. Once trained, these networks can only reliably recognise a fixed, learned set of objects or people. Although the networks can be very successful in their tasks, they are not particularly versatile in practice.

But how are neural networks and AI used in the first place? And what is inference all about?

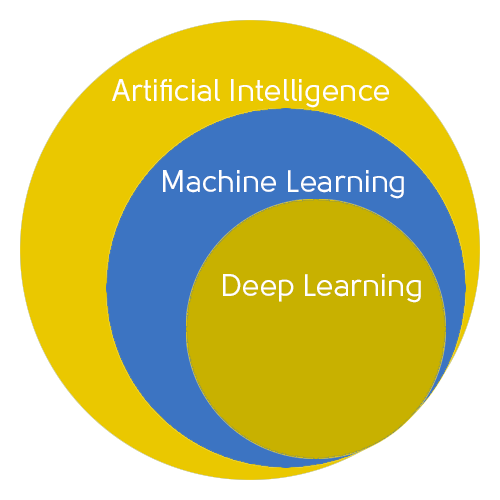

Different forms of artificial intelligence

The concept of artificial intelligence is not new. The scientific term was coined as early as 1956 at a conference at Dartmouth College in the USA. And although the first automatons date back thousands of years, this was the cornerstone of the institutionalised development of artificial intelligence as we understand it today. Behind this is the attempt to imitate human intelligence with the help of machines and computers. The underlying algorithms are correspondingly elaborate and complex.

But their problem-solving skills often remained limited – which is why so-called deep learning with the help of neural networks came into being. The parameterisation and weighting of countless data points creates a dense, hard-to-follow network of connections that resembles the neurons in the human brain.

These advanced algorithms are particularly in demand in industrial image processing: they help to tame and understand the flood of information from ever higher resolution images.

The complexity of neural networks

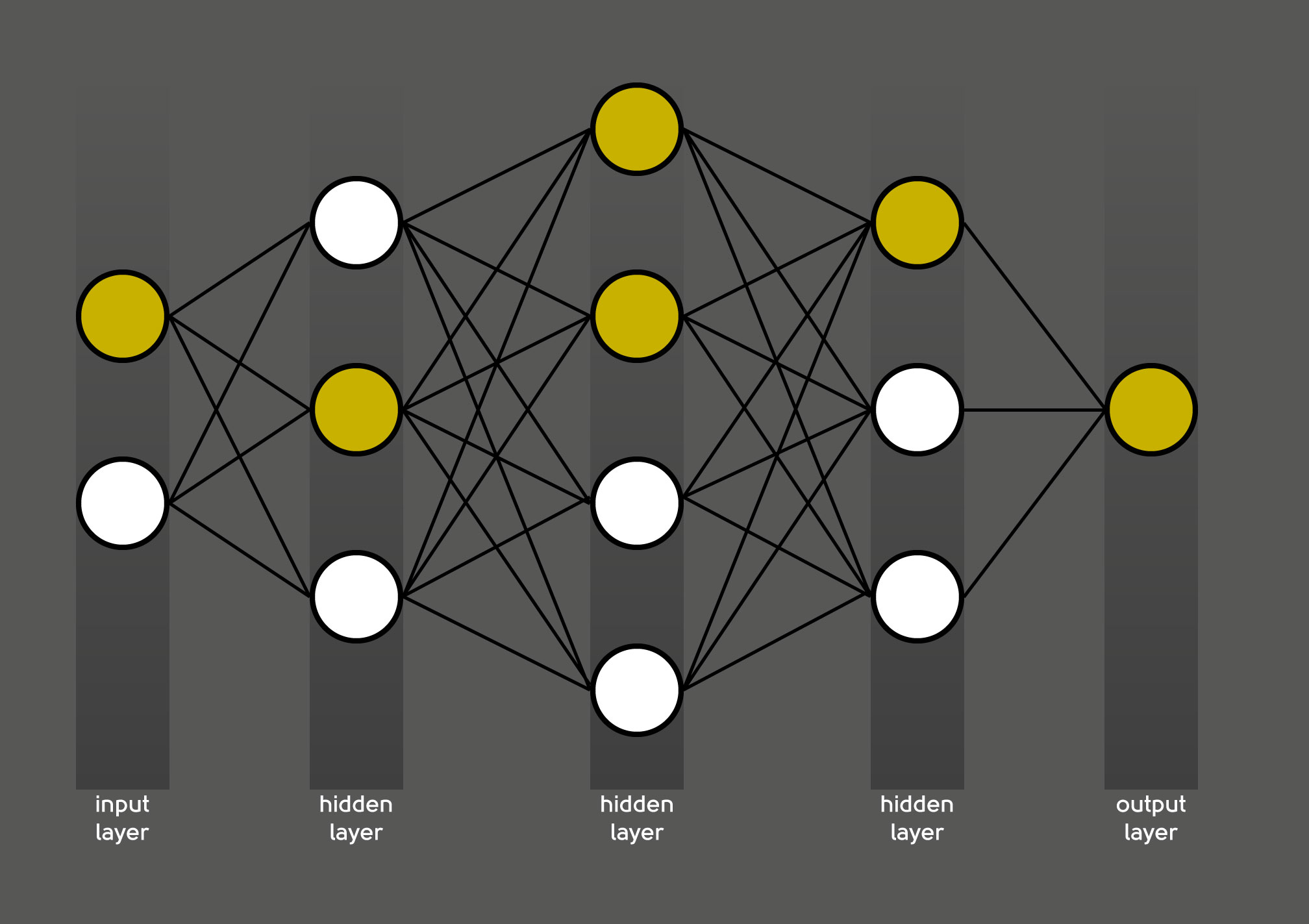

It is precisely this data density that makes it impractical to program neural networks by hand with fixed rules. In order to cope with this quantity, neural networks introduce so-called “hidden layers” into the calculation. Each layer takes over the recognition of a multitude of individual features, and only all of them together produce the overall result.

Neural networks weight the dependence of these characteristics on each other. This is how the technical term “weighting” comes about – these sometimes important, sometimes almost insignificant connections can be compared to the synapses in the brain. Important nerve pathways are more pronounced, rarely used pathways are correspondingly weighted less.
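To make the idea of weighted connections and a hidden layer more tangible, here is a minimal sketch in plain Python. All numbers, the feature values and the helper name `dense_layer` are assumptions chosen purely for illustration; a real image-processing network has far more layers and weights.

```python
import math


def sigmoid(x: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs: list[float],
                weights: list[list[float]],
                biases: list[float]) -> list[float]:
    """One layer: every neuron sums its weighted inputs, adds a bias, applies sigmoid."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hypothetical, hand-picked values (not taken from a trained network).
features = [0.9, 0.1, 0.4]               # e.g. three measured image features
hidden_weights = [
    [2.0, -1.0, 0.5],                    # neuron 1: strong, "important" connections
    [0.01, 0.02, -0.01],                 # neuron 2: almost insignificant connections
]
hidden_biases = [0.0, 0.0]
output_weights = [[1.5, 0.2]]
output_biases = [-0.5]

hidden = dense_layer(features, hidden_weights, hidden_biases)
score = dense_layer(hidden, output_weights, output_biases)[0]
print(f"hidden activations: {hidden}")
print(f"score: {score:.3f}")
```

The second hidden neuron barely reacts to the input because its weights are close to zero, which mirrors the rarely used pathways described above.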

Training prepares the neural networks for their specific task

However, neural networks need training to correctly build up this complex web of information evaluation. This is because, unlike their human counterparts, they do not readily recognise a drive shaft.

In order to correctly fulfil their intended task, the neural networks are trained with data of the respective object type. If drive shafts are to be recognised, the algorithm requires labelled images of different specimens. In an extensive training process, the network then learns a combination of parameters and weightings from which it can derive a correct result.

Especially at the beginning of the training, the network’s conclusions may be wrong – it then adjusts its weightings on its own and calculates a new result. This process often takes several hours. The training of neural networks is therefore comparatively complex and computationally intensive.
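The loop of "guess, compare with the label, adjust the weightings, try again" can be sketched with a single artificial neuron. This is a deliberately tiny example under stated assumptions: the two features, the four labelled samples and the hyperparameters `epochs` and `learning_rate` are invented for illustration, and plain gradient descent on one neuron stands in for the far more elaborate training of a deep network.

```python
import math


def predict(weights: list[float], bias: float, features: list[float]) -> float:
    """Return a value between 0 and 1: how strongly the neuron votes for 'drive shaft'."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical labelled samples: two features per part, label 1 = drive shaft.
samples = [
    ([0.9, 0.8], 1),
    ([0.8, 0.9], 1),
    ([0.2, 0.3], 0),
    ([0.1, 0.2], 0),
]


def train(samples, epochs: int = 2000, learning_rate: float = 0.5):
    """Start with uninformed weights and nudge them after every wrong or unsure guess."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in samples:
            error = predict(weights, bias, features) - label
            # Move each weight against the error, in proportion to its input.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(samples)
for features, label in samples:
    print(features, label, round(predict(weights, bias, features), 3))
```

Even this toy version runs through thousands of update steps, which hints at why training networks with millions of weightings takes hours.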

Neural network inference

In practical use – for example in the production hall, where drive shafts are to be automatically distinguished from camshafts and broomsticks – such a trained neural network can be impractical and difficult to handle. Extensive files that draw lots of computing power on the factory floor and thus cause delays? No longer conceivable in today’s automated production!

For this reason, a distinction is made between training and inference of neural networks.

Inference stands for the productive use of a neural network – it calculates the desired result, e.g. a score, a segmentation or a classification. The name says it all: in line with the verb “to infer”, inference draws a conclusion about a new object of the same class based on the individual adjustments made in the previous training. Successful training that avoids overfitting, for example, allows the inference to reliably draw correct conclusions about these new objects.
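In code, inference means that the weights are no longer changed: they are loaded and only applied to new input. The following sketch shows a classification under stated assumptions. The weights in `TRAINED_WEIGHTS`, the biases and the three input features are hypothetical, and a single linear layer with softmax stands in for a full network.

```python
import math

CLASSES = ["drive shaft", "camshaft", "broomstick"]

# Hypothetical weights, frozen after training: one row per class, one column per feature.
TRAINED_WEIGHTS = [
    [2.0, 1.0, -1.5],
    [1.0, 2.5, -1.0],
    [-2.0, -2.0, 3.0],
]
TRAINED_BIASES = [0.1, -0.2, 0.0]


def softmax(scores: list[float]) -> list[float]:
    """Turn raw scores into probabilities that sum to 1."""
    highest = max(scores)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def infer(features: list[float]) -> tuple[str, float]:
    """Apply the frozen weights to a new object and return (label, confidence)."""
    scores = [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(TRAINED_WEIGHTS, TRAINED_BIASES)
    ]
    probabilities = softmax(scores)
    best = max(range(len(CLASSES)), key=probabilities.__getitem__)
    return CLASSES[best], probabilities[best]


label, confidence = infer([0.9, 0.3, 0.1])
print(label, round(confidence, 3))
```

Note that `infer` contains no learning step at all, which is exactly what makes it cheaper than training.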

Use neural networks for visual quality inspection of metallic and complex workpieces

Inference requirements

Inference is therefore not conceivable without previous training. In a sense, it is the practical implementation of what has been learned. It is not only about the correct result, but also involves practical factors:

- Neural networks in image processing and natural language processing are often very large and complex. It is not uncommon for there to be dozens to hundreds of hidden layers. The weighted connections between them can quickly run into the millions. Using such a network in productive operation means long computing times and therefore long downtimes.

- The larger the neural network, the more CPU, memory and energy it needs to come to a result at all. The programme runtime is correspondingly longer – latency and energy consumption increase accordingly.

- Not every network works with raw data. It can often be useful to pre-process the incoming data before it enters the AI: In image processing, this can be brightening or cropping a certain section of the image. This also requires adaptation.

- What are the results of the neural network to be used for afterwards? Format changes and adaptations to an API may be necessary.

- Of course, the neural networks should be used in the long term. Therefore, it is crucial to create options for updates right at the beginning.

- The same applies to entrepreneurial growth: How can the system be optimally scaled?

All these specifications and questions have to be taken into account for inference to work well. It turns out that the requirements for applying neural networks are increasing.

For this reason, too, there is currently an increasing separation between training and inference of neural networks. This also affects the job description of the machine learning engineer: In addition to the actual developers of the networks, there are more and more experts who dedicate themselves to the implementation in a production environment. They can focus purely on inference or – comparable to DevOps – combine both in a lean way in the sense of MLOps.

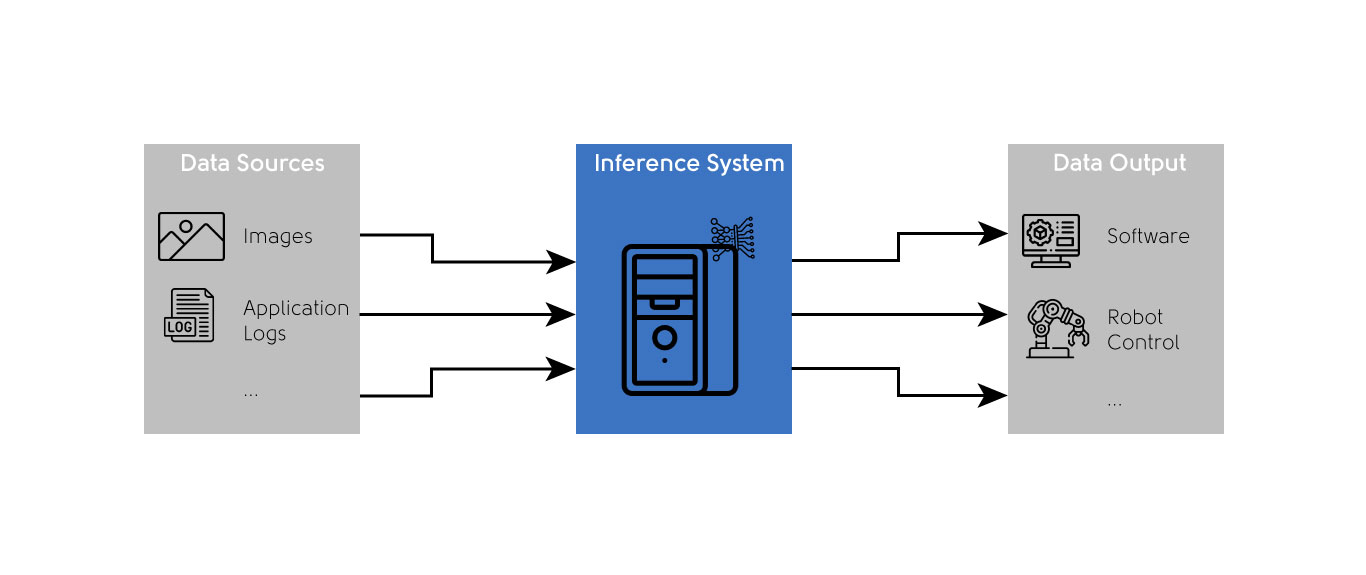

Regardless of how this role is organised, however, the person responsible takes care of the optimal dovetailing of the neural network with the entire production process:

First, usable data of sufficient quality must be drawn from various sources in production and possibly processed. Only then can the system calculate the actual inference – and then pass on the data, which may have been reprocessed, to the next production station in a timely manner.
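The three steps (prepare the data, run the inference, pass the result on) can be sketched as a small pipeline. Everything here is an assumption for illustration: the image is a tiny grid of grey values, `run_model` is only a stand-in that averages brightness instead of calling a real network, and the JSON message format is hypothetical.

```python
import json

Image = list[list[int]]  # grey values from 0 (black) to 255 (white)


def crop(image: Image, top: int, left: int, height: int, width: int) -> Image:
    """Cut out the section of the image that contains the part."""
    return [row[left:left + width] for row in image[top:top + height]]


def brighten(image: Image, offset: int) -> Image:
    """Add a constant to every pixel, clamped to the valid range."""
    return [[min(255, max(0, pixel + offset)) for pixel in row] for row in image]


def run_model(image: Image) -> float:
    """Stand-in for the trained network: mean brightness scaled to 0..1."""
    pixels = [pixel for row in image for pixel in row]
    return sum(pixels) / (255 * len(pixels))


def to_message(score: float, threshold: float = 0.5) -> str:
    """Convert the raw score into a format the next station can consume."""
    label = "drive shaft" if score >= threshold else "other"
    return json.dumps({"label": label, "score": round(score, 3)})


def inspect(image: Image) -> str:
    region = crop(image, top=1, left=1, height=2, width=2)   # pre-processing
    region = brighten(region, offset=20)                     # pre-processing
    return to_message(run_model(region))                     # inference + reprocessing


raw = [
    [0, 0, 0, 0],
    [0, 200, 180, 0],
    [0, 190, 210, 0],
    [0, 0, 0, 0],
]
message = inspect(raw)
print(message)
```

Keeping pre-processing, model call and output formatting in separate functions makes it easier to swap in an updated network later without touching the rest of the line.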

Inference optimisation methods

In order to optimally implement all these requirements in inference, machine learning engineers use both classical methods of software optimisation and methods specifically tailored to neural networks. The two most important of these are:

- Pruning: During training, parts of a network are often switched off repeatedly and at random (so-called dropout) in order to obtain a more reliable result overall. Pruning builds on a related observation: if neurons, connections or even entire layers contribute little or nothing to the final training result, they can be removed for inference. The network becomes smaller and thus faster.

- Quantisation: Just as photos on the internet are usually only displayed as compressed .jpg images, neural networks can also be compressed for their actual use, for example by storing the weightings as 8-bit integers instead of 32-bit floating-point numbers. The inference behaves similarly to the photo – the loss in accuracy is usually barely noticeable, but the file size is drastically reduced. In practice, the neural network would still reliably detect drive shafts.
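Both methods can be illustrated on a handful of weights. The sketch below uses magnitude-based pruning (zeroing the smallest weights) and symmetric 8-bit quantisation with one shared scale factor. These are common variants chosen here as assumptions, not necessarily the ones used in a given production system, and the weight values and the `threshold` are invented.

```python
def prune_by_magnitude(weights: list[float], threshold: float) -> list[float]:
    """Set weights whose absolute value is below the threshold to zero."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]


def quantise_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] plus one shared scale factor."""
    largest = max(abs(w) for w in weights)
    scale = largest / 127 if largest > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantise(quantised: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the compact integer form."""
    return [q * scale for q in quantised]


# Hypothetical weights of one trained layer.
weights = [0.82, -0.003, 0.41, 0.0007, -1.27, 0.09]

pruned = prune_by_magnitude(weights, threshold=0.01)
quantised, scale = quantise_int8(pruned)
restored = dequantise(quantised, scale)

print("pruned:   ", pruned)
print("quantised:", quantised)
print("restored: ", [round(w, 3) for w in restored])
```

The restored values differ from the pruned ones by at most half a quantisation step, which is the numerical counterpart of the barely visible difference in a compressed photo.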

This allows the inference to be extensively optimised and adapted for use in the production hall. Thus, nothing stands in the way of an intelligent drive shaft detection.
