Our software expert Silvan Lindner explains how writing and designing software has changed over the years and to what extent these changes make life easier for developers.

Software development relies on a set of tools that convert code into an executable program, the so-called toolchain. For a software company, the most important requirement is that programs are always generated identically (repeatable, reproducible, standardized), regardless of the computer on which the code is processed. To achieve this, the build system and the target architecture must be uniquely identified. The build system describes the environment in which the toolchain operates: the compiler, the compiler version, the operating system, and the versions of the dependencies and tools. The following sections walk through such a standardized process.

How a program is created

Source code defines a program, but only a compiler can translate it into an executable application. In the simplest case, with a single source file, the compiler can be called directly, for example with “clang++ main.cpp”. Many further options act as additional instructions, such as including third-party libraries or setting extra compiler flags. As the project grows, these manual compiler calls quickly become very complex.
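To illustrate how quickly such a call grows, here is a minimal Python sketch that only assembles the argument list for clang++ and executes nothing. The `-I`, `-L`, `-l` and `-o` options are standard clang++ options. The file names, library names and the helper `compiler_command` are hypothetical and chosen purely for illustration.

```python
from typing import List, Sequence


def compiler_command(
    sources: Sequence[str],
    output: str,
    include_dirs: Sequence[str] = (),
    library_dirs: Sequence[str] = (),
    libraries: Sequence[str] = (),
    flags: Sequence[str] = (),
) -> List[str]:
    """Assemble a clang++ call as an argument list (nothing is executed)."""
    command = ["clang++", *flags]
    command += [f"-I{path}" for path in include_dirs]  # header search paths
    command += list(sources)
    command += [f"-L{path}" for path in library_dirs]  # library search paths
    command += [f"-l{name}" for name in libraries]     # libraries to link
    command += ["-o", output]
    return command


# One source file: the call stays short.
simple = compiler_command(["main.cpp"], output="app")

# Several files, a third-party library and extra flags (all names hypothetical).
larger = compiler_command(
    ["main.cpp", "camera.cpp", "viewer.cpp"],
    output="app",
    include_dirs=["third_party/include"],
    library_dirs=["third_party/lib"],
    libraries=["imageio", "logging"],
    flags=["-std=c++17", "-O2", "-Wall"],
)

print(" ".join(simple))
print(" ".join(larger))
```

Even this small example shows the second call carrying several times as many arguments as the first, and every one of them has to be typed correctly on each build.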

To reduce this complexity, the build tool Make was developed first. It allows the creation of “recipes”, so-called Makefiles, which describe the build process in a simple syntax and automatically generate the compiler calls. At some point, however, a Makefile references so many libraries and tools, and contains so much text, that it too becomes confusing.
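The idea behind such a recipe can be sketched in a few lines of Python: each target lists its prerequisites and the command that produces it, and the tool works out the order of the commands. This is a simplified illustration and not how Make is implemented. In particular, real Make also compares file timestamps and rebuilds only outdated targets, which this sketch leaves out. The file names and the helper `build_plan` are hypothetical.

```python
from typing import Dict, List, Tuple

# target -> (prerequisites, command); all file names are hypothetical
RULES: Dict[str, Tuple[List[str], str]] = {
    "app": (["main.o", "camera.o"], "clang++ main.o camera.o -o app"),
    "main.o": (["main.cpp"], "clang++ -c main.cpp -o main.o"),
    "camera.o": (["camera.cpp"], "clang++ -c camera.cpp -o camera.o"),
}


def build_plan(target: str, rules: Dict[str, Tuple[List[str], str]] = RULES) -> List[str]:
    """Return the commands needed for `target`, prerequisites first."""
    plan: List[str] = []
    done = set()

    def visit(name: str) -> None:
        if name in done or name not in rules:
            return  # already planned, or a plain source file without a rule
        done.add(name)
        prerequisites, command = rules[name]
        for prerequisite in prerequisites:
            visit(prerequisite)
        plan.append(command)  # a target's command comes after its prerequisites

    visit(target)
    return plan


for command in build_plan("app"):
    print(command)
```

The developer only states what depends on what, and the sequence of compiler calls follows from that description.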

To solve this problem, the build system generator CMake was created and first released in 2000. A CMake file follows a similar idea to a Makefile, but works at a much higher level: CMake generates the files for the underlying build tool from it. This makes new projects simpler to set up and much clearer. Additional functions are easily integrated into the build process. Companies also use CMake to encrypt projects for deployment and to create installers.

Dependencies and build environments

Libraries that provide additional functionality are called dependencies. The user does not have to implement them. They include, for example:

- code collections for processing point clouds or images and for displaying them graphically,

- tools for logging, data conversion and file import/export,

- camera and other APIs,

- custom developments that can be reused in other projects.

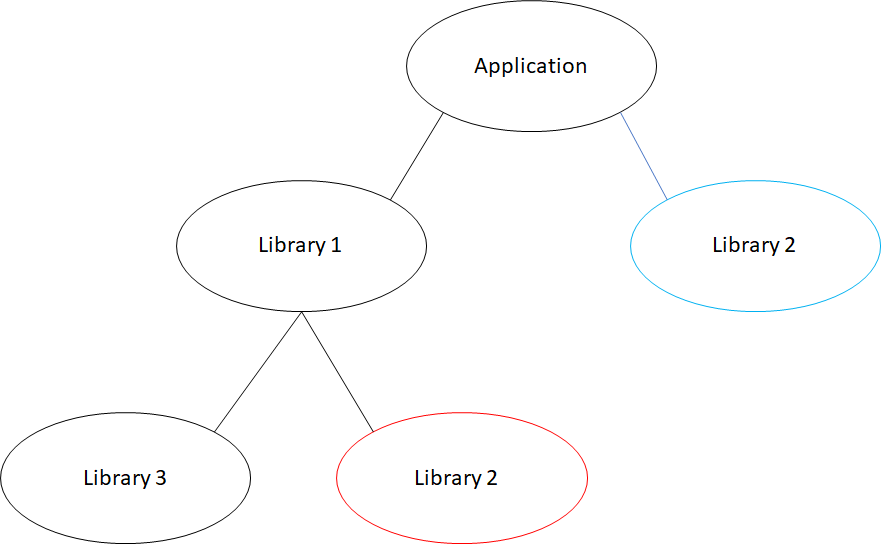

Each of these must be compiled separately before a programmer can add it to a project. Managing these dependencies, however, can be difficult. All libraries of a project can be visualized as a tree, and the same dependency may appear more than once in this tree. For example, if an application depends on Library 1 and Library 2, and Library 1 in turn depends on Library 3 and Library 2, the two paths may require different versions of Library 2. This often causes the program to stop working or to work incorrectly.
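The Library 2 situation described above can be reproduced with a short Python sketch that walks the dependency tree and reports every library required in more than one version. The version numbers and the helper `find_conflicts` are assumptions made for the example. The text itself does not specify any versions.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Package = Tuple[str, str]  # (name, version)

# package -> packages it requires; all version numbers are hypothetical
REQUIRES: Dict[Package, List[Package]] = {
    ("application", "1.0"): [("library1", "1.0"), ("library2", "2.0")],
    ("library1", "1.0"): [("library3", "1.0"), ("library2", "1.5")],
    ("library2", "2.0"): [],
    ("library2", "1.5"): [],
    ("library3", "1.0"): [],
}


def find_conflicts(
    root: Package, requires: Dict[Package, List[Package]] = REQUIRES
) -> Dict[str, Set[str]]:
    """Walk the tree from `root`; return libraries needed in more than one version."""
    versions: Dict[str, Set[str]] = defaultdict(set)
    seen: Set[Package] = set()
    stack = [root]
    while stack:
        package = stack.pop()
        if package in seen:
            continue
        seen.add(package)
        name, version = package
        versions[name].add(version)
        stack.extend(requires.get(package, []))
    return {name: found for name, found in versions.items() if len(found) > 1}


conflicts = find_conflicts(("application", "1.0"))
for name, found in conflicts.items():
    print(f"{name} is required in versions {sorted(found)}")
```

Here the application asks for one version of Library 2 directly, while Library 1 pulls in another, so Library 2 is reported as a conflict.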

A file that makes life easier

If this is the case, all libraries must be rebuilt so that they depend on the same version. Doing this manually is difficult and very time-consuming. To avoid this manual work, experts use a Conan file. Conan is a package manager that handles dependencies, build environments and versioning. It ensures that all dependencies (versions, sub-dependencies and options) and build environments (compilers, compiler options, operating systems and architectures) are consistent. Artifactory can be used as a Conan remote: all Conan recipes are stored there, which makes it possible to search for libraries. As a result, no library depends on an outdated version of another library any more.
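One way to picture "consistent" is a single identifier derived from everything that influences a binary: settings, options and required versions. The following Python sketch is a simplified illustration of that idea under stated assumptions. It is not Conan's actual algorithm or API, and the helper `build_id` and all values are hypothetical.

```python
import hashlib
import json
from typing import Dict


def build_id(
    settings: Dict[str, str], options: Dict[str, str], requires: Dict[str, str]
) -> str:
    """Derive one identifier from everything that influences the binary."""
    description = {"settings": settings, "options": options, "requires": requires}
    # sort_keys makes the text form independent of dictionary ordering
    canonical = json.dumps(description, sort_keys=True)
    return hashlib.sha1(canonical.encode("utf-8")).hexdigest()


# All values below are hypothetical examples.
first = build_id(
    settings={"os": "Linux", "arch": "x86_64", "compiler": "clang", "compiler.version": "15"},
    options={"shared": "False"},
    requires={"library2": "2.0"},
)
same_environment = build_id(
    settings={"compiler.version": "15", "compiler": "clang", "arch": "x86_64", "os": "Linux"},
    options={"shared": "False"},
    requires={"library2": "2.0"},
)
other_compiler = build_id(
    settings={"os": "Linux", "arch": "x86_64", "compiler": "clang", "compiler.version": "16"},
    options={"shared": "False"},
    requires={"library2": "2.0"},
)

print(first == same_environment)  # identical environment, identical identifier
print(first == other_compiler)    # a different compiler version changes it
```

Two builds with the same identifier were produced under the same conditions. A changed compiler version or dependency version yields a different identifier, so the mismatch becomes visible instead of causing a silent error.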

Other helpful tools

To solve problems efficiently, version control is indispensable. For this purpose, programmers use version control software such as Git. With Git, developers track changes in the code, integrate them cleanly into the client software, locate errors more easily and can undo revisions if necessary. It also allows multiple programmers to evaluate changes and release them only after a review. Through Git, a company thus has an overview of all changes and improvements proposed by its programmers.

To ensure that a program is ready for delivery, the concept of continuous integration is used. One tool for implementing it is Jenkins. In this process, several steps are carried out to build and test the program. Only if no error occurs along the way is the product ready for release. The steps typically look as follows:

- rebuild the program completely on a separate computer,

- run tests to verify the functionality of the software,

- build the installer,

- place the installer on a server with customer access.

If an error occurs at any stage, the Jenkins pipeline terminates immediately. The pipeline view shows the point at which the error was detected. This gives the developer direct feedback so that the problem can be fixed. Every time a change is made in Git, the pipeline runs again. When Jenkins finds no more errors (everything is highlighted in green), the finished product can be delivered to the customer.
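The stop-at-first-error behaviour of such a pipeline can be sketched in Python as follows. This is not Jenkins' API, since Jenkins pipelines are defined in Jenkins' own configuration. The stage names mirror the steps listed above, and the stage functions are placeholders that only simulate success or failure.

```python
from typing import Callable, List, Optional, Tuple

Stage = Tuple[str, Callable[[], bool]]  # (stage name, action returning success)


def run_pipeline(stages: List[Stage]) -> Tuple[List[str], Optional[str]]:
    """Run stages in order; return (passed stage names, first failed stage or None)."""
    passed: List[str] = []
    for name, action in stages:
        try:
            ok = action()
        except Exception:
            ok = False  # an unexpected exception counts as a failed stage
        if not ok:
            return passed, name  # stop immediately; later stages never run
        passed.append(name)
    return passed, None


# Placeholder stages: real ones would call the build, the tests and so on.
stages: List[Stage] = [
    ("clean rebuild", lambda: True),
    ("run tests", lambda: False),  # simulate a failing test run
    ("build installer", lambda: True),
    ("publish installer", lambda: True),
]

passed, failed = run_pipeline(stages)
print("passed:", passed)
print("failed at:", failed)
```

Because the simulated test stage fails, the installer is neither built nor published, and the name of the failed stage tells the developer where to look.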